Modern models, directly in your browser

Edge AI is the practice of deploying AI models on the devices that produce the data. These devices can be surveillance cameras placed in remote areas, radiology equipment in medical centers, or even your laptop. This approach has several benefits:

- Security. When inference runs on the device, the data never leaves it, so it cannot be compromised on its way to a remote server or on the server itself. This is especially important when working with medical data or confidential information.

- Latency. Edge devices are less computationally powerful than data centers packed with high-end hardware, but sending raw data off the device may be slow, or not possible at all. Agriculture and microscopy are examples of such settings.

- Cost savings. When models are deployed on the devices, you, as a service provider, no longer need to run expensive infrastructure to support all your users. You can scale at virtually no cost and put more computational resources towards developing better models for business needs.

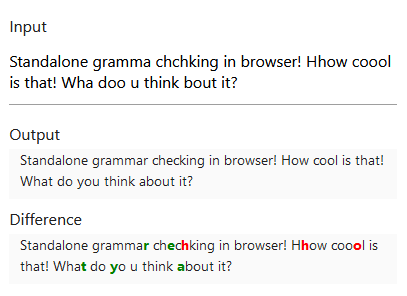

With the recent advances in deep learning and MLOps, it is becoming easier to create ML-powered products and deploy them to edge devices. This project explores how well deep learning models work in the most common edge software: your browser. All demos on this site are built using JavaScript and work in any modern browser, on both desktop and mobile.

The foundation of the project is ONNX Runtime Web, a framework for running deep learning models in ONNX format in the browser. I used pre-trained and fine-tuned models from the Hugging Face Hub. Thanks to PyTorch's built-in ONNX export and projects like Optimum and fastT5, it is straightforward to convert models and start using them on the web.
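
To give a feel for what running a model with ONNX Runtime Web looks like, here is a minimal sketch. It is not the code behind the demos: the helper names `runModel` and `softmax`, and the assumption that the model has a single float32 input and a single output of class logits, are mine for illustration. The `ort` argument is the `onnxruntime-web` module, passed in explicitly so the helper does not depend on how the page loads it.

```javascript
// Turn raw logits into probabilities. Works on plain arrays and typed arrays.
function softmax(logits) {
  // Subtract the maximum first for numerical stability.
  const max = logits.reduce((a, b) => Math.max(a, b), -Infinity);
  const exps = Array.from(logits, (v) => Math.exp(v - max));
  const sum = exps.reduce((a, b) => a + b, 0);
  return exps.map((v) => v / sum);
}

// Hypothetical helper: load an ONNX model and run it on one float32 input.
// Assumes a single-input, single-output model whose output is class logits.
async function runModel(ort, modelUrl, data, dims) {
  // Downloads the model and prepares it for inference.
  const session = await ort.InferenceSession.create(modelUrl);

  // Wrap the raw numbers in a tensor with the shape the model expects.
  const input = new ort.Tensor('float32', Float32Array.from(data), dims);

  // Inputs are keyed by name; read the names from the session
  // instead of hard-coding them.
  const feeds = { [session.inputNames[0]]: input };
  const results = await session.run(feeds);

  // Each output is a tensor; `.data` holds its values as a typed array.
  const logits = results[session.outputNames[0]].data;
  return softmax(logits);
}
```

In a page, this could be called as `runModel(ort, './model.onnx', pixels, [1, 3, 224, 224])`, where the file name and shape are placeholders that depend on the model you export. In a real application you would also create the session once and reuse it across calls, since creating it is the expensive step.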